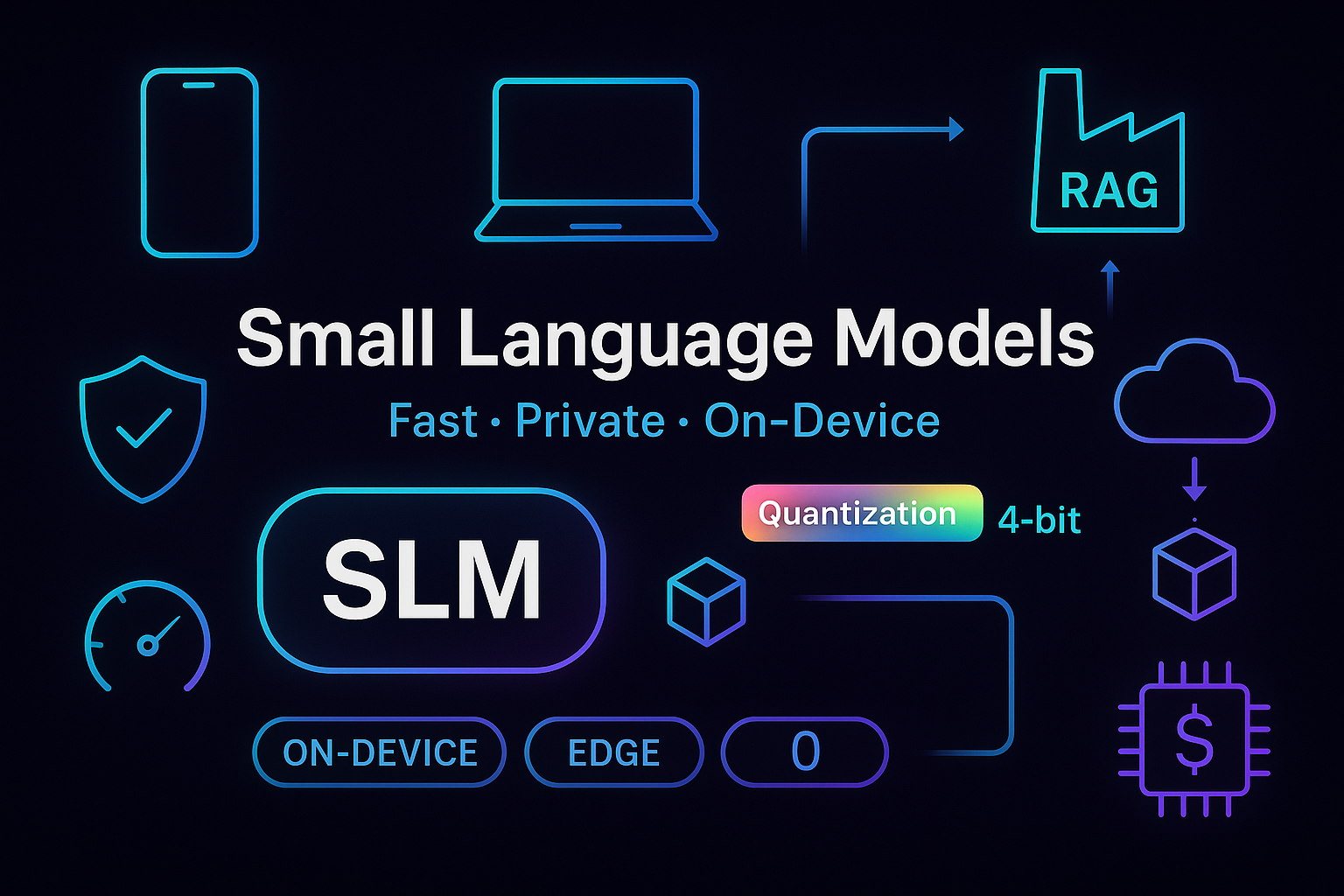

Small Language Models: Fast, Private Edge AI

Small Language Models (SLMs): Why Tiny AI Is the Next Big Thing in On‑Device and Edge Intelligence

Small Language Models (SLMs) are compact neural networks—typically in the 1B–15B parameter range—designed to deliver high-utility language capabilities with minimal compute, memory, and energy. Unlike giant LLMs optimized for broad generality, SLMs excel at focused, contextual tasks with low latency, strong privacy, and dramatically lower cost. Thanks to advances in quantization, distillation, retrieval-augmented generation (RAG), and tool use, “tiny” AI can now power chat, summarization, classification, agentic workflows, and even code assistance directly on edge devices or modest servers. The result? Faster responses, offline resilience, and a better total cost of ownership (TCO). If you’re building practical AI that ships, SLMs transform feasibility into reality—without sacrificing quality where it matters.

What Makes SLMs Different—and Why They’re Surging Now

SLMs trade raw breadth for precision and efficiency. They handle well-defined use cases—product Q&A, form autofill, log triage, policy checks—while staying small enough to run on CPUs, mobile NPUs, or low-end GPUs. Because context windows and parameter counts are constrained, SLMs encourage tight task design and structured inputs/outputs, which often improves reliability. For many day-to-day workflows, this “right-sized” approach beats sending everything to a multi-hundred-billion-parameter model.

The timing is perfect. Hardware acceleration is proliferating in laptops, phones, and edge gateways; frameworks now support 8-bit/4-bit inference; and enterprise data stacks increasingly favor retrieval-first architectures. Meanwhile, budgets are tightening. SLMs slash inference costs, reduce egress fees, and minimize vendor lock-in. In short: they unlock production-grade AI at operational scale without spiraling bills or privacy trade-offs.

Core Technical Enablers: From Compression to Retrieval and Tools

Modern SLMs punch above their weight because of a layered toolbox. Quantization (8-bit to 4-bit) compresses weights with minimal quality loss when paired with quantization-aware training. Pruning removes redundant neurons and heads, improving throughput. Distillation transfers knowledge from larger teacher models, preserving instruction-following and safety behaviors in a smaller student. Lightweight fine-tuning—LoRA or adapters—lets teams specialize models without full retrains.

Beyond the base model, RAG (retrieval-augmented generation) injects fresh, authoritative context at inference time, constraining hallucinations and keeping outputs current. Tool use and function calling let SLMs delegate: they call APIs, run SQL, or trigger automations, effectively “growing” capability without growing parameters. Optimizations like KV-caching, speculative decoding, and batching further reduce latency and cost, enabling snappy experiences even on commodity hardware.

Put simply, the winning formula is: a small, well-tuned base + sharp retrieval + judicious tools + strong prompting. That stack narrows the gap with massive LLMs for many scoped tasks—while remaining private, fast, and predictable.

High-Impact Use Cases and ROI You Can Quantify

Where do SLMs shine? Anywhere latency, privacy, and cost matter more than maximal generality. Consider:

- On-device copilots: Email drafting, calendar triage, note summarization, and accessibility features running offline for instant UX and strong privacy.

- Field/industrial edge: Log parsing, fault summaries, SOP guidance, and safety checklists on ruggedized gateways without cloud dependency.

- Customer operations: Policy-grounded chat, claim intake validation, templated responses, and real-time form assistance with deterministic guardrails.

- Developer productivity: Repo-scoped code suggestions, commit message generation, and issue summarization without sending IP off-prem.

The economics are compelling. SLMs reduce per-request spend, shrink egress, and unlock horizontal scaling on affordable hardware. Teams report measurable gains across key metrics: faster first-token latency, higher throughput per dollar, improved data residency compliance, and fewer escalations due to tighter guardrails with domain RAG. When a task is well-bounded, the ROI of “small and specialized” beats “big and generic.”

Deployment Patterns and Architecture Blueprints

Choosing an SLM is only half the story; the architecture determines outcomes. Common patterns include:

- On-device inference: Mobile/desktop NPU or CPU with 4–8 bit models for micro-copilots, privacy-first scenarios, and offline UX.

- Edge gateways: Small GPUs or efficient CPUs hosting shared SLMs that serve local sensors, kiosks, or retail terminals.

- Hybrid RAG: Local SLM for reasoning; a vector store (on-prem or VPC) for retrieval; optional fallback to a larger hosted model for out-of-scope queries.

- Serverless or autoscaled pods: Burst capacity for peak loads with request-level caching and orchestration.

Architect for determinism and safety: use structured prompts, schema-constrained outputs (JSON), and function calling for high-stakes actions. Implement guardrails and policy checks pre- and post-generation. Exploit caching—prompt, vector, and results—to curb cost. Use embeddings tuned for your domain to boost retrieval precision, and shard indexes by tenant or geography to meet compliance.

Operationally, treat SLMs like any production service. Add observability (latency, cost, error distribution), evaluation (task-specific metrics, red-teaming), versioning (canary deploys), and rollbacks. Maintain a fallback chain: SLM → larger model → human review, with clear confidence and escalation policies.

Limits, Risks, and How to Mitigate Them

SLMs can still hallucinate, misread ambiguous prompts, or falter on open-domain queries. The fix isn’t always “use a bigger model.” Instead, tighten scope and engineer the task: authoritative retrieval, schema validation, and rejection sampling. For critical workflows, require source citations and confidence signals. When a request is out-of-policy or low confidence, fail safe—defer to a larger model or a human.

Domain adaptation is another pitfall. Generic SLMs may underperform without careful fine-tuning and curation. Start with high-quality instruction data and enforce style/terminology with system prompts and examples. Test adversarially (prompt injections, PII exfiltration) and add layers: input sanitization, tool whitelists, output filters. Finally, revisit the economics regularly: monitor drift, content freshness in RAG, and hardware utilization to keep the TCO advantage intact.

Conclusion

Small Language Models aren’t a consolation prize—they’re a strategic choice. With compression, distillation, RAG, and tool use, SLMs deliver fast, affordable, and private intelligence for the majority of real-world tasks. They thrive where scope is clear, latency matters, and budgets are finite. By pairing a compact model with solid retrieval, strong guardrails, and production-grade MLOps, teams can ship reliable copilots across devices, branches, and clouds. The next wave of AI adoption won’t be defined only by the biggest models, but by the right-sized models embedded everywhere users work. If you want practical gains now—and a platform that scales tomorrow—tiny AI is the big idea to bet on.

FAQ

How do I choose the right SLM size for my use case?

Match complexity to parameters. Start with 3B–8B for chat, summarization, and classification; go smaller (≤3B) for constrained assistants or on-device tasks; step up only if evaluation gaps persist after RAG, prompt tuning, and adapters. Always validate with your own task metrics.

What hardware is sufficient for SLM inference?

For edge/on-device, modern CPUs and NPUs handle 4–8 bit SLMs with short contexts. Small GPUs (laptop/workstation or modest cloud instances) boost throughput for multi-user workloads. Prioritize memory bandwidth, quantization support, and efficient runtimes.

How should I evaluate an SLM before production?

Create a representative test set with gold answers, include tricky negatives, and measure accuracy, latency, and cost. Add policy and safety checks. Run canaries and A/B tests, monitor drift, and maintain a fallback path to a larger model or human review.