RAG vs CAG: Build Fast, Trustworthy LLM Assistants

RAG vs CAG: Retrieval‑Augmented Generation vs Context‑Augmented Generation Explained

“RAG vs CAG” compares two complementary patterns for grounding large language models (LLMs) with reliable information. RAG (Retrieval‑Augmented Generation) retrieves relevant documents at query time—typically via embeddings, vector search, and re‑ranking—then prompts the LLM with excerpts. CAG (Context‑Augmented Generation) assembles a richer, task‑specific context package from multiple sources (databases, APIs, logs, recent conversation state, business rules, and even RAG results) before generating an answer. While RAG is document‑centric and search‑driven, CAG is orchestration‑centric and workflow‑driven. Why does this distinction matter? Because production AI should optimize for trustworthiness, latency, cost, and governance. Understanding when to prefer RAG, CAG, or a hybrid approach helps teams ship assistants, copilots, and chatbots that are fast, accurate, and auditable.

Core Definitions and Mental Models

RAG augments an LLM with external knowledge by retrieving small, relevant slices of text at inference time. It shines when you have large unstructured corpora (wikis, manuals, tickets) and need fresh, cited answers without retraining. The typical pipeline includes chunking, embedding, approximate nearest neighbor (ANN) search, and cross‑encoder re‑ranking, followed by prompt construction with citations.

CAG broadens the idea: instead of relying only on search, it composes context from heterogeneous sources to fit a task plan. A CAG runtime might query relational tables for live metrics, call a pricing API, retrieve the last N conversation turns, apply business policies, and then include a couple of RAG snippets. In other words, CAG focuses on context assembly and tool‑mediated reasoning, not just retrieval. Note: “CAG” is not a formal standard; vendors may label it “Context‑Aware Generation” or “Contextual Augmented Generation,” but the essence is the same—curated, multi‑source context.

Architecture: How RAG and CAG Systems Are Built

RAG architecture is pipeline‑oriented. You maintain an index (vector or hybrid lexical+vector), ingest documents with chunking and metadata, and run a retrieval chain at query time. Enhancements include re‑ranking models, query expansion, citation span extraction, and guardrails. The LLM sees a compact prompt with retrieved evidence, often capped by context length. Think “smart search + summarize.”

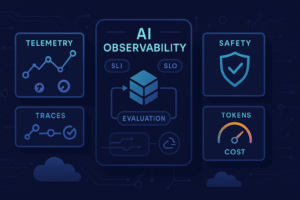

CAG architecture is orchestration‑oriented. A planner or router decides which tools to call—SQL/Graph queries, knowledge‑graph traversals, search APIs, analytics engines, or RAG itself—then composes a canonical context bundle. This might include structured tables, JSON function outputs, policy snippets, user profile attributes, and conversation memory. The prompt can contain sections (facts, constraints, retrieved docs, intermediate calculations) or be fed via function‑calling and tool‑use. Because CAG spans systems of record, it usually demands strong observability, caching, and data governance.

In practice, CAG frequently embeds RAG as one of many context sources. The key difference is that CAG treats retrieval as a building block inside a larger context assembly plan, not the whole system.

When to Choose RAG, CAG, or a Hybrid

Choose RAG when your knowledge is mostly unstructured, the main task is question answering, and freshness matters. Examples include help‑center search, policy Q&A, and product documentation. You’ll benefit from fast iteration: improve chunking, tune embeddings, rerankers, and prompts to steadily raise precision without modifying back‑end systems.

Choose CAG when answers require live or structured data, multi‑step reasoning, or policy enforcement. Think sales copilot pulling CRM, inventory, and pricing, or an internal assistant that must respect role‑based access and regional regulations. CAG is ideal where context depends on user state, ongoing workflows, or tool outputs (forecasts, SQL results, graph traversals).

Hybrid patterns are common. For instance, a CAG planner can call RAG for explanatory background, then fetch current numbers from a warehouse and apply business rules before drafting an email. Or, start with RAG and progressively layer CAG capabilities—conversation memory, calculators, and API calls—as use cases mature.

Implementation Patterns, Tooling, and Best Practices

For RAG success, treat retrieval as a ranking problem. Invest in high‑quality ingestion: metadata, deduplication, smart chunking (semantic boundaries), and hybrid search (BM25 + embeddings). Add a cross‑encoder reranker for relevance, and expand chunks to include context windows that preserve meaning. Enforce citation spans so users can verify sources. Typical tools include vector databases, rerankers, and evaluation frameworks for retrieval metrics.

For CAG, prioritize orchestration and data contracts. Implement a planner/router that selects tools, composes structured context, and enforces policies (PII redaction, entitlements). Use function calling or tool schemas to pass typed outputs. Cache aggressively: response caches, vector caches, and KV caches for model state. Log tool calls with inputs/outputs to ensure traceability. Because CAG touches authoritative systems, integrate observability and cost controls from day one.

Cross‑cutting best practices include:

- Prompt schemas with named sections (facts, constraints, citations) to reduce leakage between evidence and instruction.

- Guardrails: allowed tools, content filters, regex/citation checks, and policy tests.

- Change management: version your indexes, prompts, templates, and tool contracts.

- Human‑in‑the‑loop for high‑stakes actions, with clear UI for sources and confidence signals.

Performance, Cost, and Evaluation

RAG latency is dominated by retrieval (ANN + reranking) and token generation. Control it with ANN parameters, precomputed embeddings, and compact prompts. CAG adds tool latency (SQL, APIs) and planning overhead—offset via caching, parallel tool calls, and selective context packing. Model choice matters: larger models reduce planning errors but raise cost; small models plus good context often outperform bigger models on grounded tasks.

Evaluate with both retrieval and answer metrics. For RAG:

- Retrieval: hit rate, MRR/NDCG, recall@K, time‑to‑first‑token.

- Grounding: citation coverage, evidence overlap, hallucination rate.

For CAG:

- Tool efficacy: plan success rate, tool precision, error propagation.

- Task success: exact match/F1, faithfulness, business KPI lift (deflection, CSAT, revenue).

Use benchmarks like RAGAS/TruLens for groundedness, plus red‑team tests for safety and policy adherence. Track cost per resolved task, not just per token.

Conclusion

RAG and CAG are not rivals—they’re layers in the same grounding stack. RAG excels at turning sprawling text into verifiable answers with citations, while CAG orchestrates multi‑source context—structured data, tools, policies, memory—for actions and decisions that demand more than search. Start with the minimal pattern that meets your needs: RAG for document‑centric Q&A, CAG when live data, workflows, or governance are essential. As your use case matures, blend them: let a CAG planner call RAG for narrative context and tools for facts. Measure relentlessly, cache wisely, and make sources transparent. The result? Faster, safer, and more useful AI that your users can trust.

FAQ

Is CAG just “long‑context prompting” with bigger context windows?

No. Long context helps, but CAG emphasizes selective assembly of relevant, governed context from tools and systems of record. Bigger windows alone don’t solve planning, policy, or freshness.

Can I combine RAG and CAG?

Absolutely. Many production systems use CAG planners that call RAG for background plus SQL/APIs for live numbers, then fuse everything into a single, auditable prompt.

How do I migrate from RAG to CAG?

Keep your RAG pipeline, add a lightweight tool layer (SQL, APIs), introduce a router/planner, and standardize a context schema. Gradually move logic from prompt heuristics to tool‑mediated steps.

Do citations guarantee truth?

Citations improve trust but don’t guarantee correctness. Evaluate faithfulness against sources, and prefer structured queries for authoritative facts when available.

What’s the biggest cost lever?

Context discipline. Retrieve and assemble only what’s necessary, use reranking, parallelize tools, cache aggressively, and right‑size the model for each step.